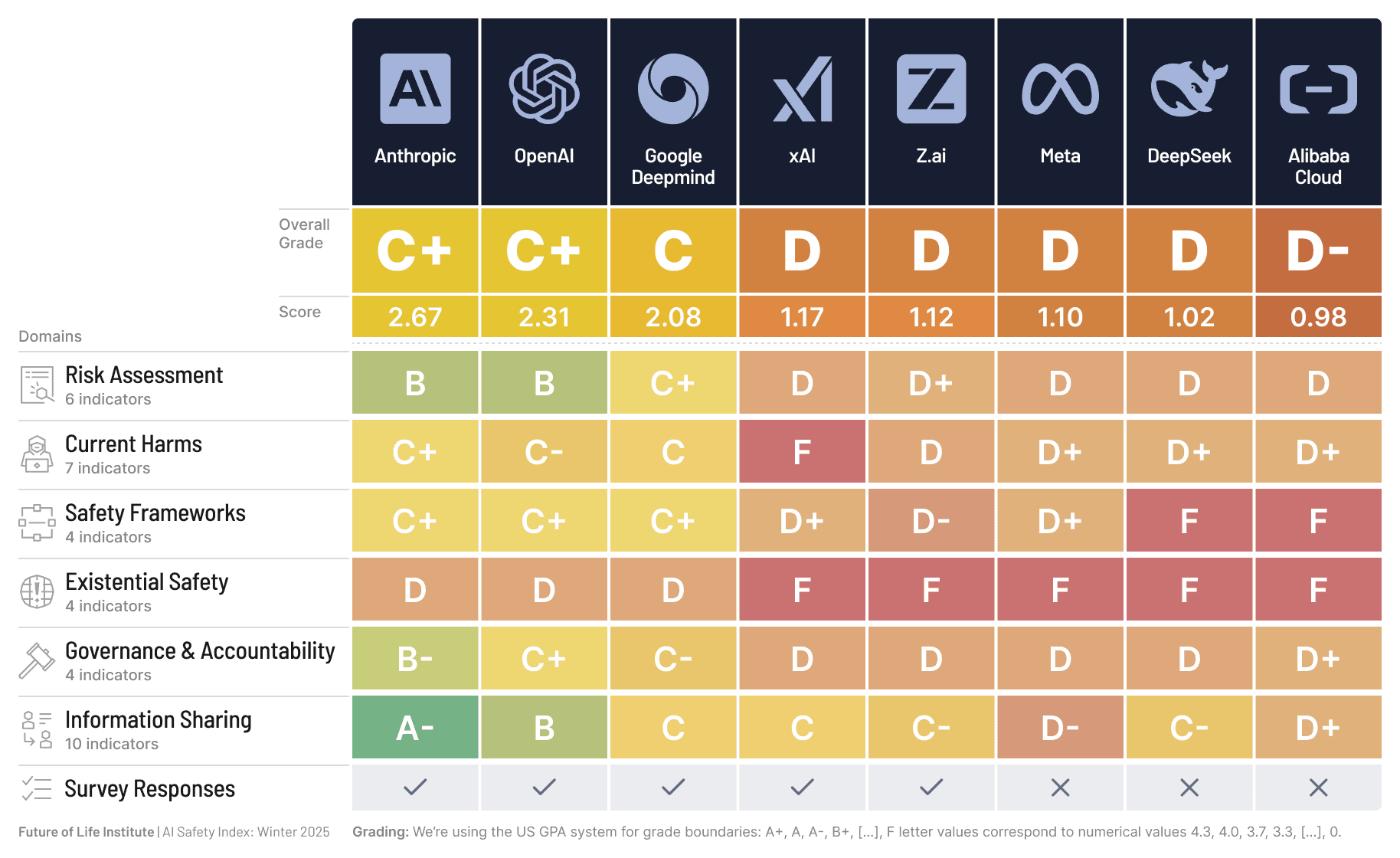

Research by the Future of Life Institute: the most comprehensive independent assessment of AI company safety practices to date.

The best overall grade any company received is a C+.

No company scored above a D on existential safety. Every company scored below a C in at least one critical domain.

What this means for you

- Risk Assessment: identifying and evaluating risks before deploying models.
  - Before using an AI tool, look for published information about its known risks for your use case.

- Current Harms: addressing bias, misuse, and misinformation in current products.
  - These harms are happening now, not hypothetically.

- Safety Frameworks: structured safety processes, red-teaming, and testing protocols.
  - Look for third-party audits, not self-assessments. A company's own safety page is marketing until someone independent verifies it.

- Existential Safety: plans for catastrophic or irreversible AI risks.
  - No company scored above a D here. The hardest problems are getting the least attention. If this concerns you, talk about it. Awareness is the first step toward accountability.

- Governance and Accountability: independent oversight, not just internal review.
  - Check whether your AI provider has an independent safety board.

- Information Sharing: publishing safety research and incident reports.
  - Compare what companies publish about their safety testing. The ones sharing the least usually have the most to hide.